Deqing Fu

I’m Deqing Fu, a fourth-year Ph.D. candidate in Computer Science at the University of Southern California (USC). My main research interests are deep learning theory, natural language processing, and the interpretability of AI systems. I’m (co-)advised by Prof. Vatsal Sharan of the USC Theory Group and Prof. Robin Jia of the Allegro Lab within the USC NLP Group, and I work closely with Prof. Mahdi Soltanolkotabi and Prof. Shang-Hua Teng. During my Ph.D. studies, I spent time at Google and Meta as a student researcher. Before USC, I completed my undergraduate degree in Mathematics (with honors) and my master’s in Statistics at the University of Chicago.

My research focuses on understanding large language models from algorithmic and theoretical perspectives, as well as developing practical methods in interpretability, synthetic data generation, and multimodal learning. You can find my publications on Google Scholar and my recent CV here.

Algorithmic Perspectives on Large Language Models

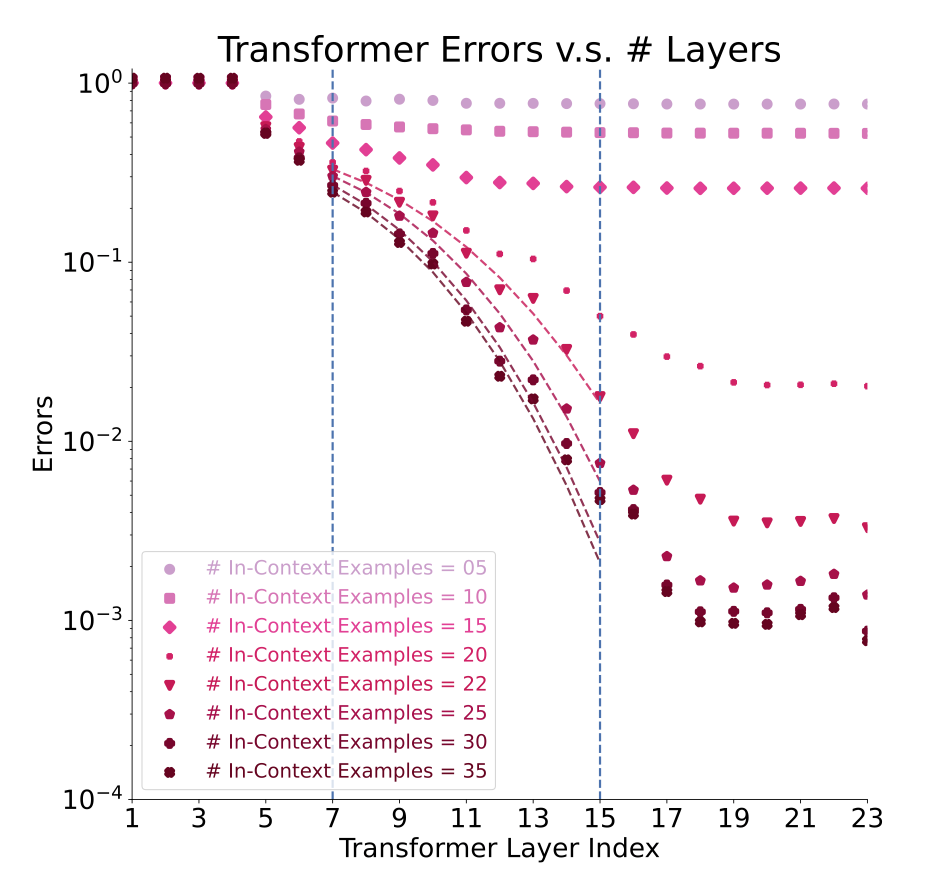

- Can Transformers learn algorithms simply from data? (NeurIPS 2024, ICML 2026)

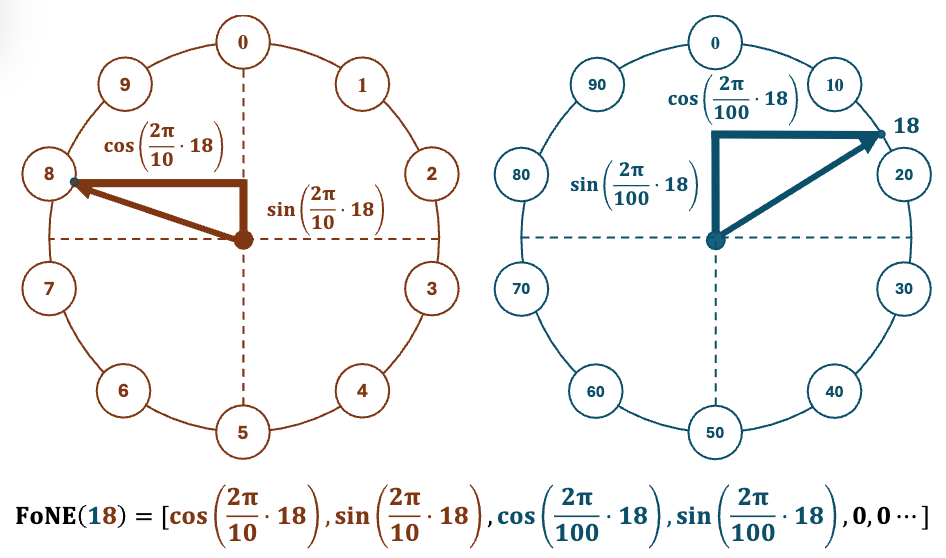

- Arithmetic in pretrained LLMs: memorization vs. mechanisms? (NeurIPS 2024, ICLR 2026)

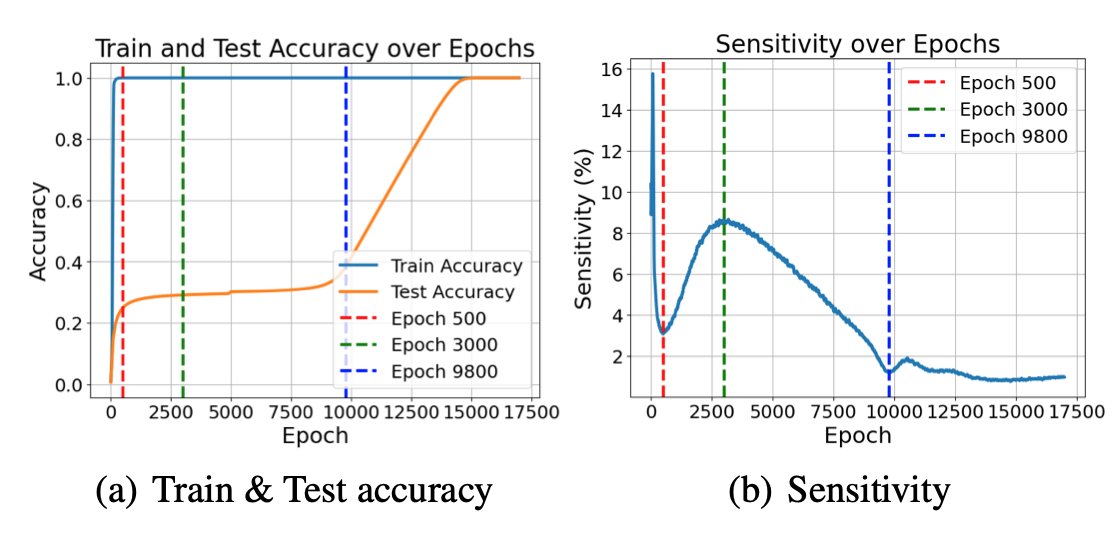

- What distinguishes Transformers from other architectures? (ICLR 2025)

Interpretability and Alignment

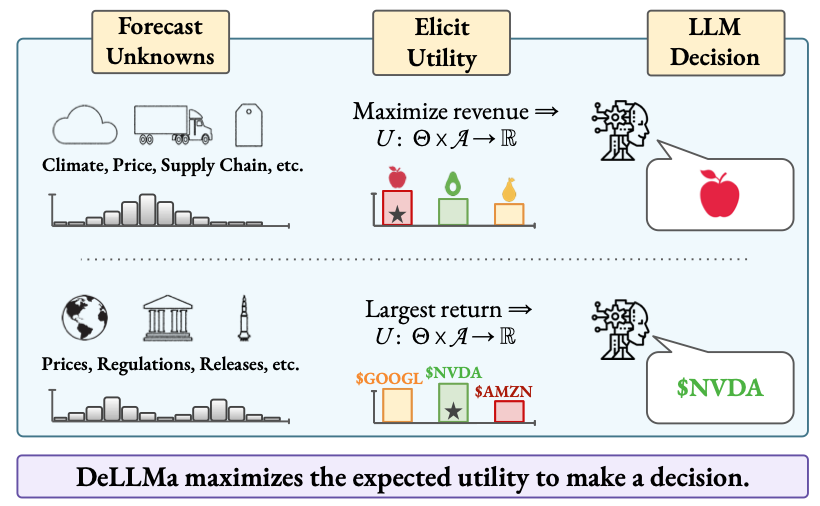

- Decision theory for LLM reasoning under uncertainty (ICLR 2025 Spotlight, arXiv 2026)

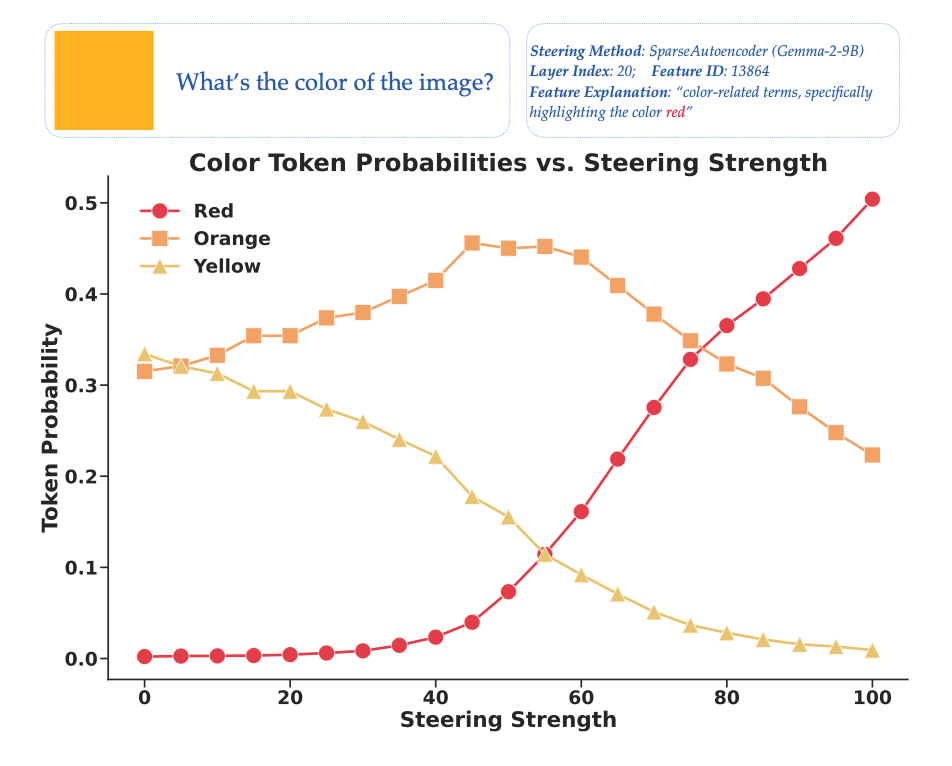

- Steering vectors for improved visual understanding (ACL 2026), and for efficient and privacy-preserving synthetic data generation (ICML 2026)

- Mechanistic interpretability via SAEs and transcoders (arXiv 2025, Tech Report)

Multimodal Models and Applications

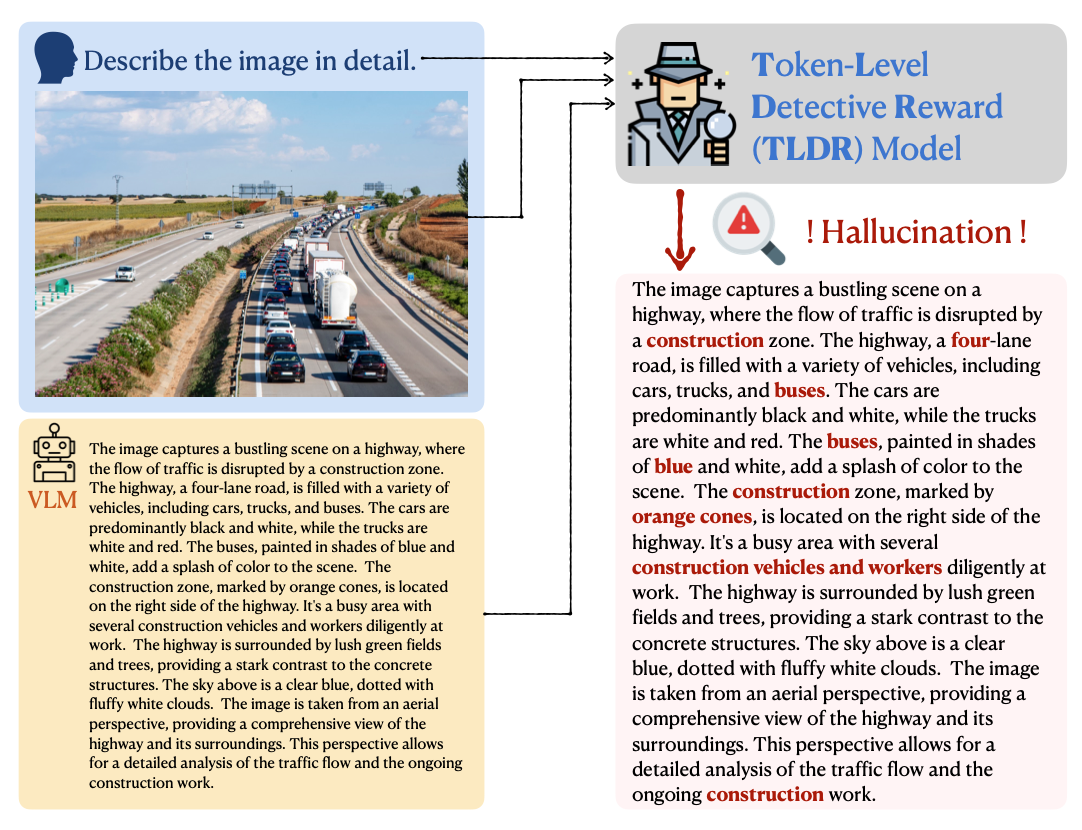

- Multimodal rewards for improving generation quality: token-level hallucination reduction (ICLR 2025) and text-to-image alignment (NAACL 2025)

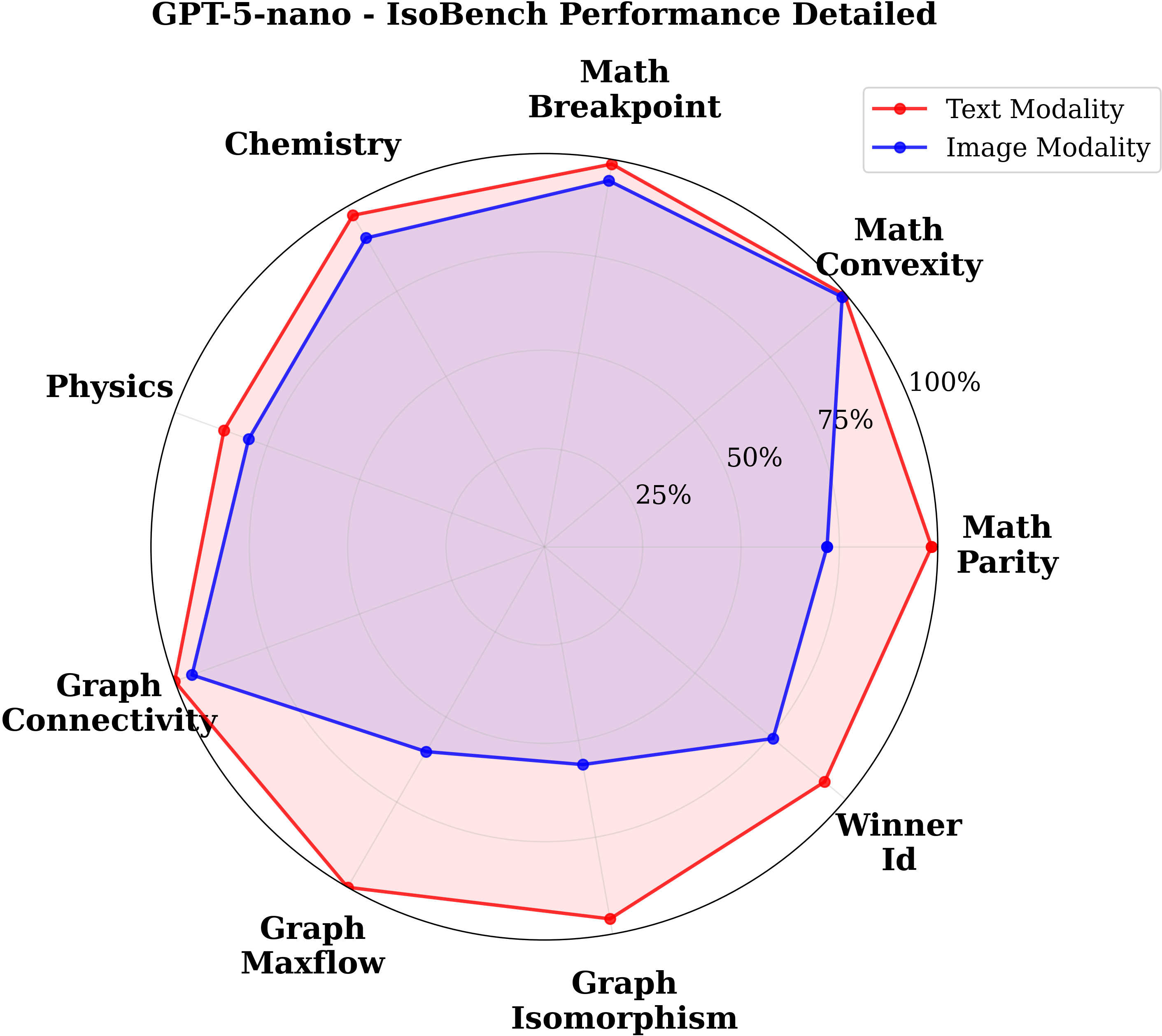

- Modality sensitivity in Multimodal LLMs (COLM 2024)

- Large-scale dataset for visual reasoning with images (ICLR 2026)

News

| Date | News |
|---|---|
| Apr 30, 2026 | Two papers (EPSVec and Transformers Learn Graph Connectivity) accepted to ICML 2026. |
| Apr 22, 2026 | New preprint: Convergent Evolution: How Different Language Models Learn Similar Number Representations. See the website and blog post. |
| Jan 31, 2026 | New preprint: EPSVec: Efficient and Private Synthetic Data Generation via Dataset Vectors. |
| Jan 26, 2026 | Two papers (FoNE and Zebra-CoT) accepted to ICLR 2026. |
| Oct 22, 2025 | New preprint: Transformers Provably Learn Algorithmic Solutions for Graph Connectivity, But Only with the Right Data. |

Selected Publications

See the full list or Google Scholar for all publications.

2026

2025

- ICLR

TLDR: Token-Level Detective Reward Model for Large Vision Language Models. In International Conference on Learning Representations (ICLR), 2025.

- ICLR

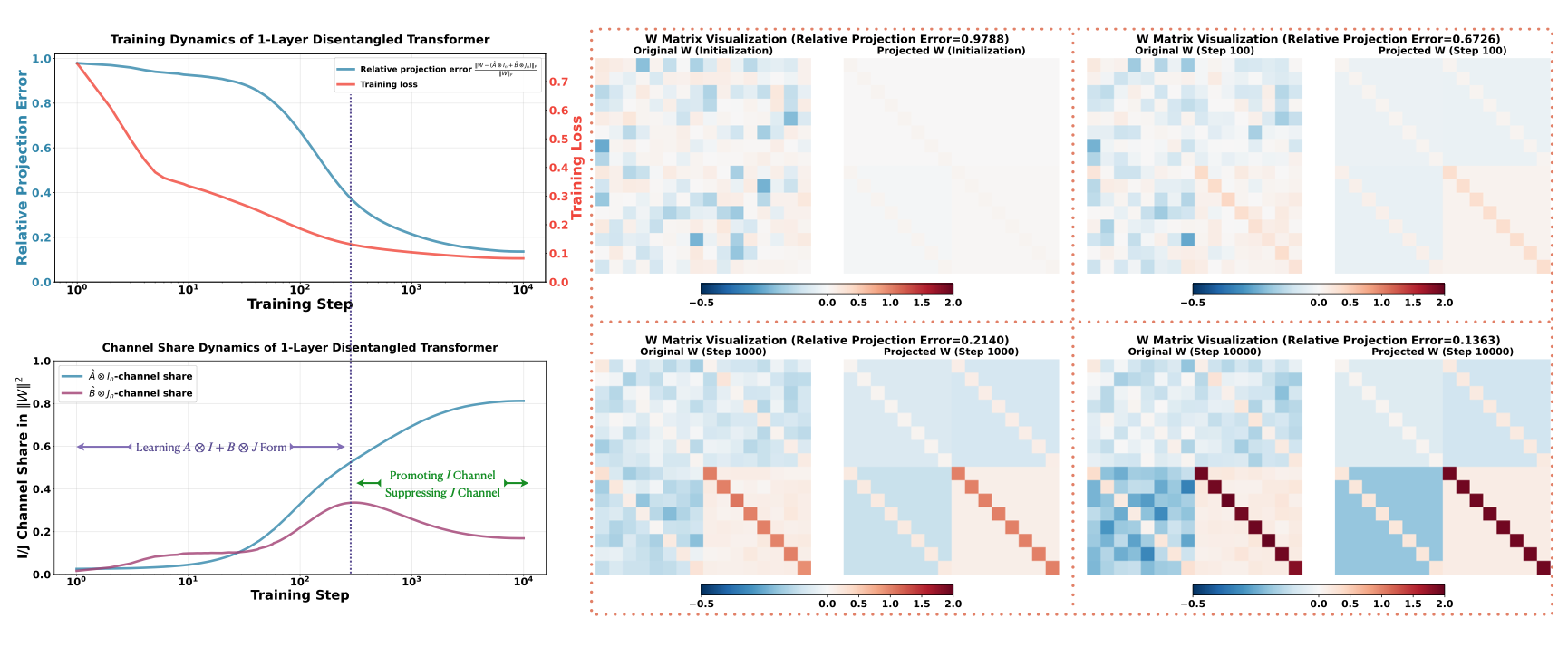

Transformers Learn Low Sensitivity Functions: Investigations and Implications. In International Conference on Learning Representations (ICLR), 2025. *Equal Contribution

- NAACL

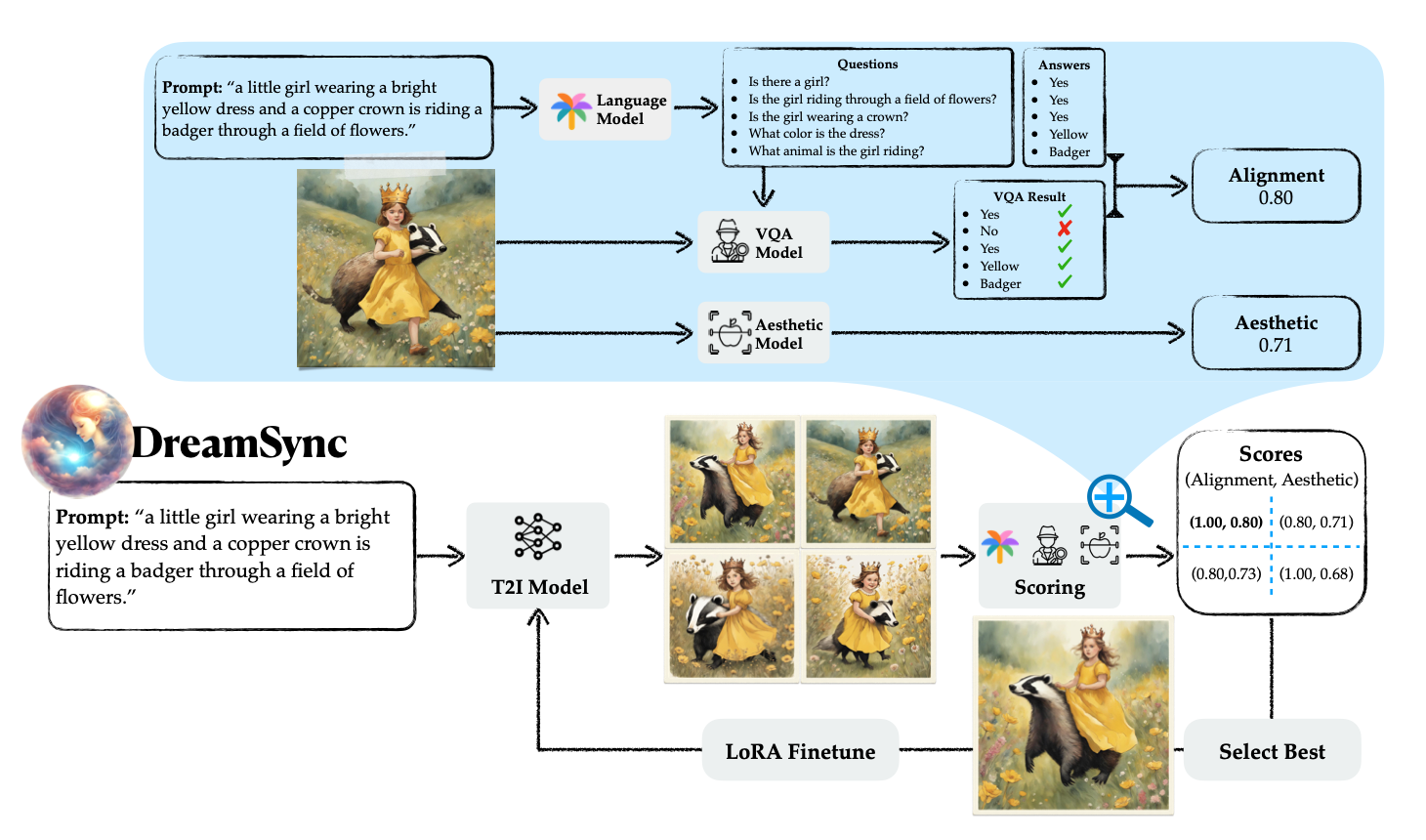

DreamSync: Aligning Text-to-Image Generation with Image Understanding Feedback. In Annual Conference of the North American Chapter of the Association for Computational Linguistics (NAACL), 2025. *Equal Contribution

2024

- NeurIPS

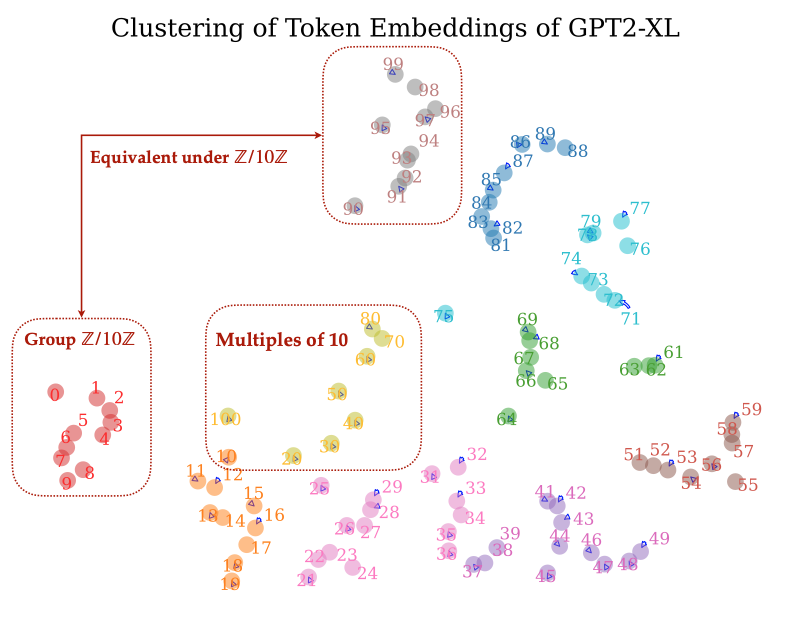

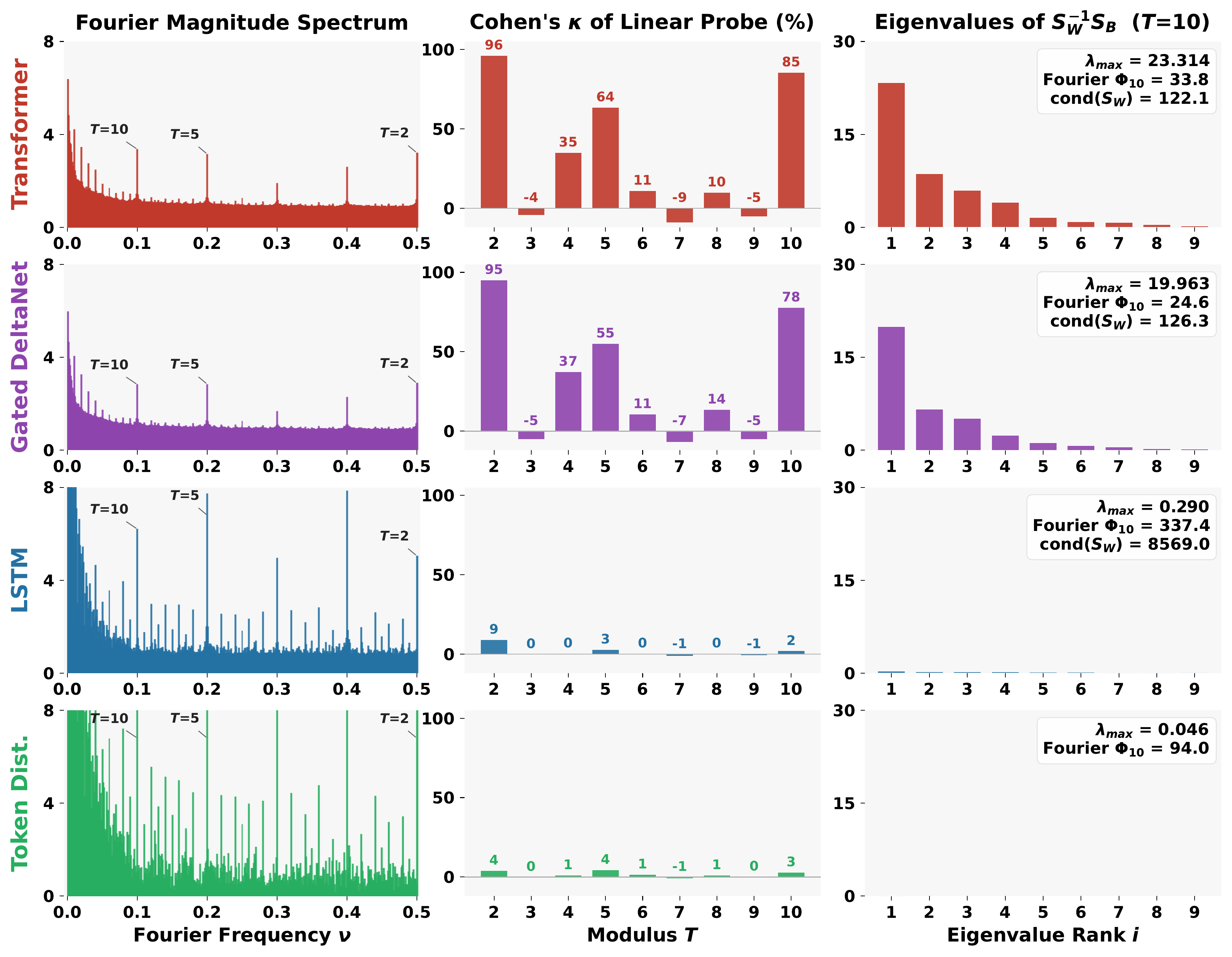

Pre-trained Large Language Models Use Fourier Features to Compute Addition. In Conference on Neural Information Processing Systems (NeurIPS), 2024.

Dataset

Code